Introduction

Modern AI applications such as semantic search, recommendation systems, and intelligent chatbots need to understand the meaning of text, not just match keywords.

Traditional search systems rely on exact word matching, which often misses relevant results that use different wording.

Companies like OpenAI and Google address this problem using vector embeddings.

In this article, we will cover:

- The problem with keyword search

- How vector embeddings solve it

- A practical example

- Python code implementation

- System diagrams

Problem

Consider a simple search system.

User Query

car repair

Documents in the system

1. automobile maintenance guide

2. how to fix a bike

3. cooking recipes

A traditional keyword search system may not return document 1, because neither "car" nor "repair" appears in it.

But humans understand that:

car ≈ automobile

This is the semantic understanding problem.
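To make the failure concrete, here is a minimal sketch of a keyword matcher run against the three documents above. The function name `keyword_search` and the whitespace tokenization are illustrative assumptions, not a description of any particular search engine.

```python
# Toy keyword search: a document matches only if it shares an exact word with the query.
documents = [
    "automobile maintenance guide",
    "how to fix a bike",
    "cooking recipes",
]

def keyword_search(query, docs):
    """Return the documents that contain at least one exact query word."""
    query_words = set(query.lower().split())
    return [doc for doc in docs if query_words & set(doc.lower().split())]

results = keyword_search("car repair", documents)
print(results)  # → []
```

The most relevant document is never returned, because it shares no word with the query.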

The Solution: Vector Embeddings

Vector embeddings convert text into numerical vectors that represent meaning.

Example:

car → [0.21, -0.78, 0.44, ...]

automobile → [0.19, -0.80, 0.40, ...]

bike → [-0.55, 0.33, -0.91, ...]

Notice that:

car vector ≈ automobile vector

This allows AI systems to measure similarity between meanings.
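One common way to measure that closeness is cosine similarity. The sketch below applies it to the first three components of the example vectors above. Real embeddings have hundreds or thousands of dimensions, so the truncated numbers are for illustration only, and `cosine_similarity` is a helper written for this example.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# First three components of the illustrative vectors from the text.
car = [0.21, -0.78, 0.44]
automobile = [0.19, -0.80, 0.40]
bike = [-0.55, 0.33, -0.91]

print("car vs automobile:", round(cosine_similarity(car, automobile), 3))
print("car vs bike:", round(cosine_similarity(car, bike), 3))
```

On these toy numbers the first score is close to 1 and the second is negative, which matches the intuition that car and automobile point in nearly the same direction while bike does not.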

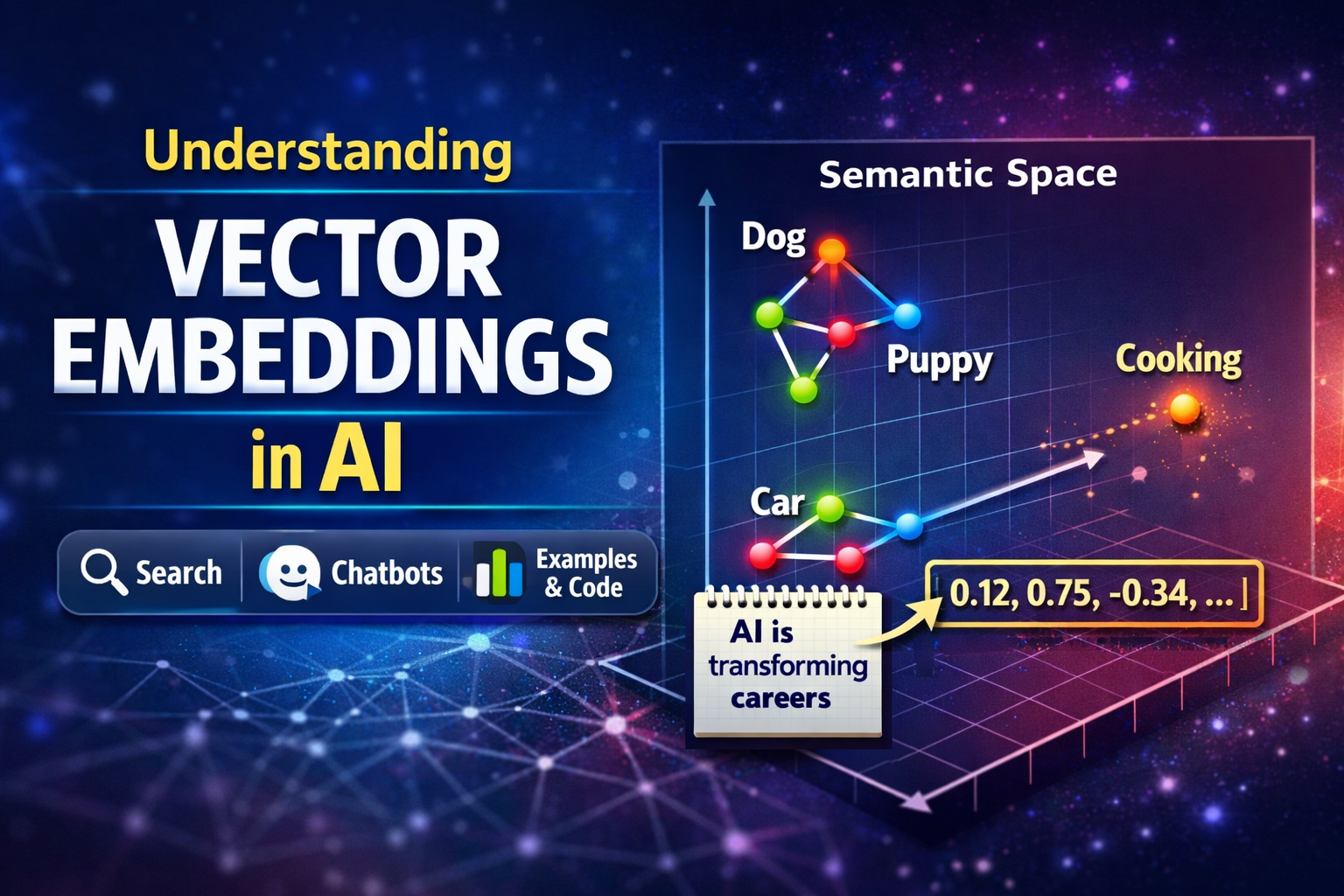

Diagram 1 – Semantic Vector Space

animal

|

dog ------ puppy

|

|

vehicle

car -------- automobile

|

truck

Words with similar meanings are closer together in vector space.

Example

Sentences

Sentence A: I love programming

Sentence B: Coding is my passion

Sentence C: The weather is hot today

Similarity

| Sentence Pair | Similarity |
| ------------- | ---------- |
| A vs B | High |
| A vs C | Low |

The AI system understands that programming ≈ coding.

Diagram 2 – AI Search Architecture

User Query

|

v

Convert Query to Embedding

|

v

Vector Database

(search similar vectors)

|

v

Retrieve Relevant Documents

|

v

Send Context to LLM

|

v

Generate Intelligent Answer

This architecture is commonly used in AI assistants and RAG systems.
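The flow in Diagram 2 can be sketched in a few lines. This is a simplified illustration, not a production design: the in-memory list stands in for a vector database, the three-dimensional vectors are made-up placeholders for real embeddings, and the names `retrieve` and `build_prompt` are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Stand-in for a vector database: (document text, embedding) pairs.
# The vectors are hypothetical placeholders, not output from a real model.
index = [
    ("automobile maintenance guide", [0.19, -0.80, 0.40]),
    ("how to fix a bike", [-0.55, 0.33, -0.91]),
    ("cooking recipes", [0.70, 0.60, 0.10]),
]

def retrieve(query_vector, index, k=1):
    """Return the k documents whose embeddings are most similar to the query."""
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]

def build_prompt(question, context_docs):
    """Combine the retrieved documents and the user question into one LLM prompt."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using this context:\n{context}\n\nQuestion: {question}"

# Pretend this vector came from embedding the query "car repair".
query_vector = [0.21, -0.78, 0.44]
top_docs = retrieve(query_vector, index, k=1)
print(build_prompt("car repair", top_docs))
```

In a real system the query vector would come from the same embedding model used for the documents, and the resulting prompt would be sent to an LLM to generate the answer.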

Python Code Example

Below is a simple example using embeddings from OpenAI.

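from openai import OpenAI

# The client reads the API key from the OPENAI_API_KEY environment variable.
client = OpenAI()

text = "AI is transforming careers"

# Request an embedding for the text from the embeddings endpoint.
response = client.embeddings.create(
    model="text-embedding-3-small",
    input=text,
)

# The response holds one entry per input; take the vector of the first.
embedding = response.data[0].embedding

print("Vector length:", len(embedding))
print("First 10 numbers:", embedding[:10])

Example Output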

Vector length: 1536

First 10 numbers: [0.012, -0.342, 0.921, ...]

This vector represents the semantic meaning of the text.
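As a possible next step, the same endpoint can embed several texts in one request so that their vectors can be compared. The sketch below uses the three sentences from the earlier example. It assumes the `openai` package is installed and an API key is set in the `OPENAI_API_KEY` environment variable. `embed_texts` and `cosine_similarity` are helper names chosen for this example, and no scores are shown because they depend on the model.

```python
import math
import os

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def embed_texts(texts, model="text-embedding-3-small"):
    """Embed a list of texts in one API call (needs network access and an API key)."""
    from openai import OpenAI  # imported lazily so the helper above works without the package

    client = OpenAI()
    response = client.embeddings.create(model=model, input=texts)
    return [item.embedding for item in response.data]

def main():
    sentences = [
        "I love programming",        # Sentence A
        "Coding is my passion",      # Sentence B
        "The weather is hot today",  # Sentence C
    ]
    a, b, c = embed_texts(sentences)
    print("A vs B:", cosine_similarity(a, b))
    print("A vs C:", cosine_similarity(a, c))

# Only call the API when a key is available.
if os.environ.get("OPENAI_API_KEY"):
    main()
else:
    print("Set OPENAI_API_KEY to run this example.")
```

If the model behaves as the table above suggests, the A vs B score should come out higher than the A vs C score.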

Where Vector Embeddings Are Used

Vector embeddings are widely used in modern AI systems.

Semantic Search

Search based on meaning instead of keywords.

Chatbots

AI assistants retrieve relevant knowledge.

Recommendation Systems

Suggest similar products or movies.

Document Clustering

Group similar documents automatically.

Vector databases and similarity-search libraries used for this include:

- Pinecone

- FAISS

- Weaviate

Key Takeaway

Vector embeddings allow AI systems to move from:

Keyword Matching

to

Semantic Understanding

This is one of the foundational technologies behind modern AI applications.

Conclusion

Vector embeddings are a powerful technique that enables machines to capture relationships between words, sentences, and documents.

They play a critical role in:

- AI search engines

- Intelligent chatbots

- Recommendation systems

- Retrieval Augmented Generation (RAG)

Understanding embeddings is an important step for anyone building modern AI applications.